An Evening with Doug Hubbard: The Failure of Risk Management: Why it's *Still* Broken and How to Fix It

There seem to be two types of risk managers in the world: those who are perfectly satisfied with the status quo, and those who think current techniques are vague and do more harm than good. Doug Hubbard is firmly in the latter camp. His highly influential 2009 book, The Failure of Risk Management: Why it’s Broken and How to Fix It, takes readers on a journey through the history of risk, why some methods fail to enable better decision making and, most importantly, how to improve. Since 2009, however, much has happened in the world of forecasting and risk management: the Deepwater Horizon Offshore Oil Spill in 2010, the Fukushima Daiichi Nuclear Disaster in 2011, multiple large data breaches (Equifax, Anthem, Target), and many more. It makes one wonder: in the last 10 years, have we “fixed” risk?

Luckily, we get an answer. A second edition of the book will be released in July 2019, titled The Failure of Risk Management: Why it's *Still* Broken and How to Fix It. On September 10th, 2018, Hubbard treated San Francisco to a preview of the new edition, which includes updated content and his analysis of the events of the last decade. Fans of quantitative risk techniques and measurement (yes, we’re out there) also got to play a game that Hubbard calls “The Measurement Challenge,” in which participants attempt to stump him with questions they think are immeasurable.

It was a packed event, with over 200 people from diverse fields and technical backgrounds in attendance in downtown San Francisco. Richard Seiersen, Hubbard’s How to Measure Anything in Cybersecurity Risk co-author, kicked off the evening with a few tales of risk measurement challenges he’s overcome during his many years in the cybersecurity field.

Is it Still Broken?

The first edition of the book used historical examples of failed risk management, including the 2008 credit crisis, the Challenger disaster and natural disasters, to demonstrate that the most popular form of risk analysis today (scoring using ordinal scales) is flawed and does not effectively help manage risk. In the 10 years since Hubbard’s first edition was released, quantitative methods, while still not widely adopted, have made inroads in consulting firms and companies around the world. Factor Analysis of Information Risk (FAIR) is an operational risk analysis methodology that shares many of the approaches and philosophies that Hubbard advocates, and it has gained significant traction in risk departments in the last decade. One has to ask: is it still broken?

It is. Hubbard pointed to several events since the first edition:

Fukushima Daiichi Nuclear Disaster (2011)

Deepwater Horizon Offshore Oil Spill (2010)

Flint Michigan Water System (2012 to present)

Samsung Galaxy Note 7 (2016)

Amtrak Derailments/collisions (2018)

Multiple large data breaches (Equifax, Anthem, Target)

Risk managers are fighting the good fight in trying to drive better management decisions with risk analysis, but by and large, we are not managing our single greatest risk: how we measure risk.

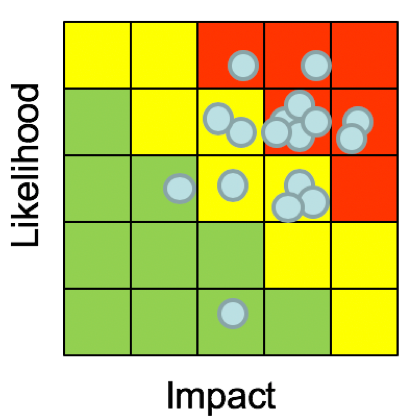

Hubbard drove the point home by explaining that the most popular method of risk analysis, the risk matrix, is fatally flawed. Research by Cox, Bickel and many others found that the risk matrix adds error to decision making rather than reducing it.

Fig 1: Typical risk matrix

Look at Fig. 1 and ask: “Should we spend $X to reduce risk Y or $A to reduce risk B?” It is not clear how to answer this question using the risk matrix methodology.

How do we fix it? Hubbard elaborated on the solution at length, but the short answer is: math with probabilities. The first edition of the book contains tangible examples, and the second edition will expand upon them.
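To make “math with probabilities” a little more concrete, here is a minimal sketch of how the spending question from Fig. 1 could be approached with a simple simulation. It is not taken from Hubbard's talk or book: every probability, loss range and mitigation cost below is an invented placeholder, the lognormal loss model is an assumption, and the function names are hypothetical.

```python
import math
import random


def expected_annual_loss(probability, low, high, trials=20_000, seed=0):
    """Estimate the average annual loss for one risk by simulation.

    probability: assumed chance that the event happens in a given year.
    low, high:   assumed 90% confidence interval for the loss if it happens,
                 modelled here as a lognormal distribution (an assumption).
    """
    rng = random.Random(seed)
    mu = (math.log(low) + math.log(high)) / 2
    # A 90% interval spans about 3.29 standard deviations (2 * 1.645).
    sigma = (math.log(high) - math.log(low)) / 3.29
    total = 0.0
    for _ in range(trials):
        if rng.random() < probability:  # did the event occur this year?
            total += rng.lognormvariate(mu, sigma)
    return total / trials


def benefit_per_dollar(cost, before, after):
    """Reduction in expected annual loss bought by each dollar spent."""
    return (before - after) / cost


# Hypothetical inputs: none of these figures come from the talk.
# Risk Y: 10% chance per year, $1M to $10M loss; spending X = $200k halves the chance.
y_before = expected_annual_loss(0.10, 1_000_000, 10_000_000)
y_after = expected_annual_loss(0.05, 1_000_000, 10_000_000)
# Risk B: 30% chance per year, $100k to $1M loss; spending A = $50k cuts it to 10%.
b_before = expected_annual_loss(0.30, 100_000, 1_000_000)
b_after = expected_annual_loss(0.10, 100_000, 1_000_000)

print(f"Spend X on risk Y: {benefit_per_dollar(200_000, y_before, y_after):.2f} per dollar")
print(f"Spend A on risk B: {benefit_per_dollar(50_000, b_before, b_after):.2f} per dollar")
```

With probabilities and dollar ranges as inputs, the two options can be compared on the same scale, which a red/yellow/green cell cannot offer. Expected loss is only one summary; a fuller analysis would also look at the whole distribution of simulated losses.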

The Measurement Challenge!

A pervasive problem in business is the belief that some things, especially those that are intangible, cannot be measured. Doug Hubbard has proven, however, that anything can be measured. The technique lies in understanding exactly what measurement is and framing the object under measurement in a way that facilitates measurement. Based on this idea, Hubbard created a game called The Measurement Challenge, which he runs on his website, in his books and occasionally when he speaks at an event. The concept is simple: Hubbard takes questions, concepts, items, or ideas that people perceive to be immeasurable and demonstrates how to measure them. The Measurement Challenge is based on another of Hubbard’s books, How to Measure Anything: Finding the Value of Intangibles in Business, which describes simple statistical techniques for measuring (literally!) anything.

When the participants checked into the event that evening, The Measurement Challenge was briefly explained to them, and they were given paper to write down one item they thought was hard or impossible to measure. Some entries have actually been measured before, such as the number of jelly beans in a jar, the number of alien civilizations in the universe, and decomposition problems similar to the number of piano tuners in Chicago. The most interesting ones were things that are intangible, which is, of course, Hubbard’s specialty.

Measuring intangibles requires a clear definition of what it is you're trying to measure.

It’s useful to keep in mind the clarification chain, described in Hubbard’s book How to Measure Anything: Finding the Value of Intangibles in Business. The clarification chain is summed up as three axioms:

If it matters at all, it is detectable/observable.

If it is detectable, it can be detected as an amount (or range of possible amounts.)

If it can be detected as a range of possible amounts, it can be measured.

All entries were collected, and duplicates were combined and tallied up for final voting. The finalist questions were put up on an online voting system for all participants to vote on from their smartphones. There was a wide variety of excellent questions, but only two were picked so that there was plenty of time to delve into the concepts of measurement and how to decompose the problems.

Some interesting questions that weren’t picked:

Measure the capacity for hate

The effectiveness of various company training programs

The value of being a better calibrated estimator

How much does my daughter love me?

The winning questions were:

How much does my dog love me?, and

What is the probable reputation damage to my company resulting from a cyber incident?

Challenge #1: How much does my dog love me?

How much does my dog love me? This is a challenging question, and it combined many other questions that people had asked of a similar theme. There were many questions on love, hate and other emotions, such as: How do I know my daughter loves me? How much does my spouse love me? How can I measure the love between a married couple? How much does my boss hate me? If you can figure out how to measure love, you would also know how to measure hate. Taking that general theme, “How much does my dog love me?” is a good first measurement challenge.

Hubbard read the question, looked up somewhat quizzically and told the person who had asked the question to raise their hand. He asked a counter question: “What do you mean by love?” Most people in the audience, including the person who’d asked the question, were unsure how to answer. Pausing to let the point be made, Hubbard then started to explain how to solve this problem.

He explained that the concept of “love” has many different definitions depending on who you are, your culture, age, gender, and many other factors. The definition of love also varies with the object of the question. For example, the definition of love from an animal is very different from the definition of love from a child, which is in turn very different from love from a spouse. After explaining, Hubbard asked again: “What do you mean by love from your dog? What does this mean?”

People started throwing out ideas of what it means for a dog to love an individual, naturally using the clarification chain as a mental framework. Observable, detectable behaviors were shouted out, such as:

When I come home from work, my dog is happy to see me. She jumps up on me. This is how I know she loves me.

I observe love from my dog when he cuddles in bed after a long day at work.

Some dogs are service animals and are trained to save lives or assist throughout your day. That could also be a form of love.

Hubbard asked a follow-up question, “Why do you care if your dog loves you?” This is where the idea of measuring “love” started to come into focus for many of the people in the audience. If one is able to clearly define what love is, able to articulate why one personally cares, and frame the measurement as what can be observed, meaningful measurements can be made.

The last question Hubbard asked was, “What do you already know about this measurement problem?” If one’s idea of love from a dog is welcome greetings, one can measure how many times the dog jumps up, or some other activity that is directly observable. In the service animal example, what would we observe that would tell us that the dog is doing its job? Is it the number of activities per day that the dog is able to complete successfully? Would it be passing certain training milestones, so that you would know that the dog can save your life when needed? If your definition of love falls within those parameters, it should be fairly easy to build measurements around what you can observe.

Challenge #2: What is the probable reputation damage to my company resulting from a cyber incident?

The next question was by far one of the most popular questions asked. This is a very interesting problem, because some people would consider it an open-and-shut case: reputation damage has been measured many times by many people, and the techniques are fairly common knowledge. However, many risk managers proudly proclaim that reputation damage simply cannot be measured, for various reasons: the tools don't exist, it’s too intangible, or it's not possible to inventory all the areas in which a business has reputation as an asset to lose.

Just as with the first question, Hubbard asked the person who posed this problem to raise their hand and then asked a series of counter questions designed to probe exactly what they meant by “reputation”: what could you observe that would tell you that you have a good reputation and, conversely, what could you observe that would tell you that you have a bad reputation?

Framing it in the form of observables started an avalanche of responses from the audience. One person chimed in to say that if a company had a good reputation, it would lead to customers’ trust and sales might increase. Another person added that an indicator of a bad reputation could be a sharp decrease in sales. The audience got the point quickly. Many other ideas were brought up:

A drop in stock price, which would be a measurement of shareholder trust/satisfaction.

A bad reputation may lead to high interest rates when borrowing money.

Inability to retain and recruit talent.

Increase in public relations costs.

Many more examples, including sector-specific ones, were given by the audience. By the end of this exercise, the audience was convinced that reputation could indeed be measured, as well as many other intangibles.
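As a rough illustration of where this decomposition leads, the observables the audience named can each be given a range and then added up by simulation. The sketch below is only that, a sketch: the component names echo the audience's list, but the dollar ranges are invented, and drawing each cost uniformly between a minimum and a maximum is a simplifying assumption rather than anything presented at the event.

```python
import random
import statistics

# Hypothetical one-year cost ranges (minimum, maximum) in dollars.
# The components echo the audience's list; the figures are invented.
COMPONENTS = {
    "lost_sales": (200_000, 2_000_000),
    "higher_borrowing_costs": (0, 300_000),
    "recruiting_and_retention": (50_000, 400_000),
    "public_relations": (100_000, 500_000),
}


def simulate_reputation_damage(components, trials=10_000, seed=1):
    """Return simulated total costs, one per trial.

    Each component is drawn uniformly between its minimum and maximum,
    which is a simplifying assumption made for this sketch.
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        totals.append(sum(rng.uniform(lo, hi) for lo, hi in components.values()))
    return totals


totals = simulate_reputation_damage(COMPONENTS)
# quantiles(n=20) returns 19 cut points at 5%, 10%, ..., 95%.
cuts = statistics.quantiles(totals, n=20)
p5, p50, p95 = cuts[0], cuts[9], cuts[18]
print(f"5th percentile:  ${p5:,.0f}")
print(f"Median:          ${p50:,.0f}")
print(f"95th percentile: ${p95:,.0f}")
```

The output is a range with a stated confidence rather than a single label such as “high”, which is the kind of answer the question “what is the probable reputation damage?” is asking for.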

Further Reading

Hubbard previewed his new book at the event and everyone in the audience had a great time trying to stump him with measurement challenges, even if it proved to be futile. These are all skills that can be learned. Check out the links below for further reading.

Douglas Hubbard

The Failure of Risk Management, by Douglas Hubbard

The Failure of Risk Management: Why it’s Broken and How to Fix It | Current, First edition published in 2009

The Failure of Risk Management: Why it's *Still* Broken and How to Fix It | 2nd edition, Due to be released in July, 2019

How to Measure Anything, by Douglas Hubbard

How to Measure Anything: Finding the Value of Intangibles in Business | 3rd edition

How to Measure Anything in Cybersecurity Risk | with co-author Richard Seiersen

More Information on Risk Matrices

Bickel et al. “The Risk of Using Risk Matrices”, Society of Petroleum Engineers, 2014

Tony Cox “What’s wrong with Risk Matrices”